What is AI Visibility? The Complete Guide for 2026

By Satish K · 18 min read · Published December 10, 2024 · Last updated: May 1, 2026

AI visibility: how ChatGPT, Claude, and Perplexity mention your brand. 5 metrics, 7 signals, and GEO methods that boost citation rates up to 40%.

TL;DR

- AI visibility is the frequency, accuracy, and sentiment with which AI assistants mention your brand in responses.

- AI responses name only 3–5 brands per query, making this a winner-take-most dynamic.

- Brands on 4+ platforms are 2.8× more likely to be cited by ChatGPT (Digital Bloom, 2025).

- Five metrics measure it: mention rate, position, sentiment, share of voice, and citation rate.

- GEO techniques can increase citation rate by up to 40% (Princeton/IIT Delhi, ACM KDD 2024).

- Gartner projects AI will handle 10% of all search queries by 2026.

ChatGPT, Claude, Perplexity, and Gemini name only 3–5 brands per answer. AI visibility measures how often, how accurately, and how favorably those answers include yours. Brands absent from those responses lose purchase decisions they never knew were happening.

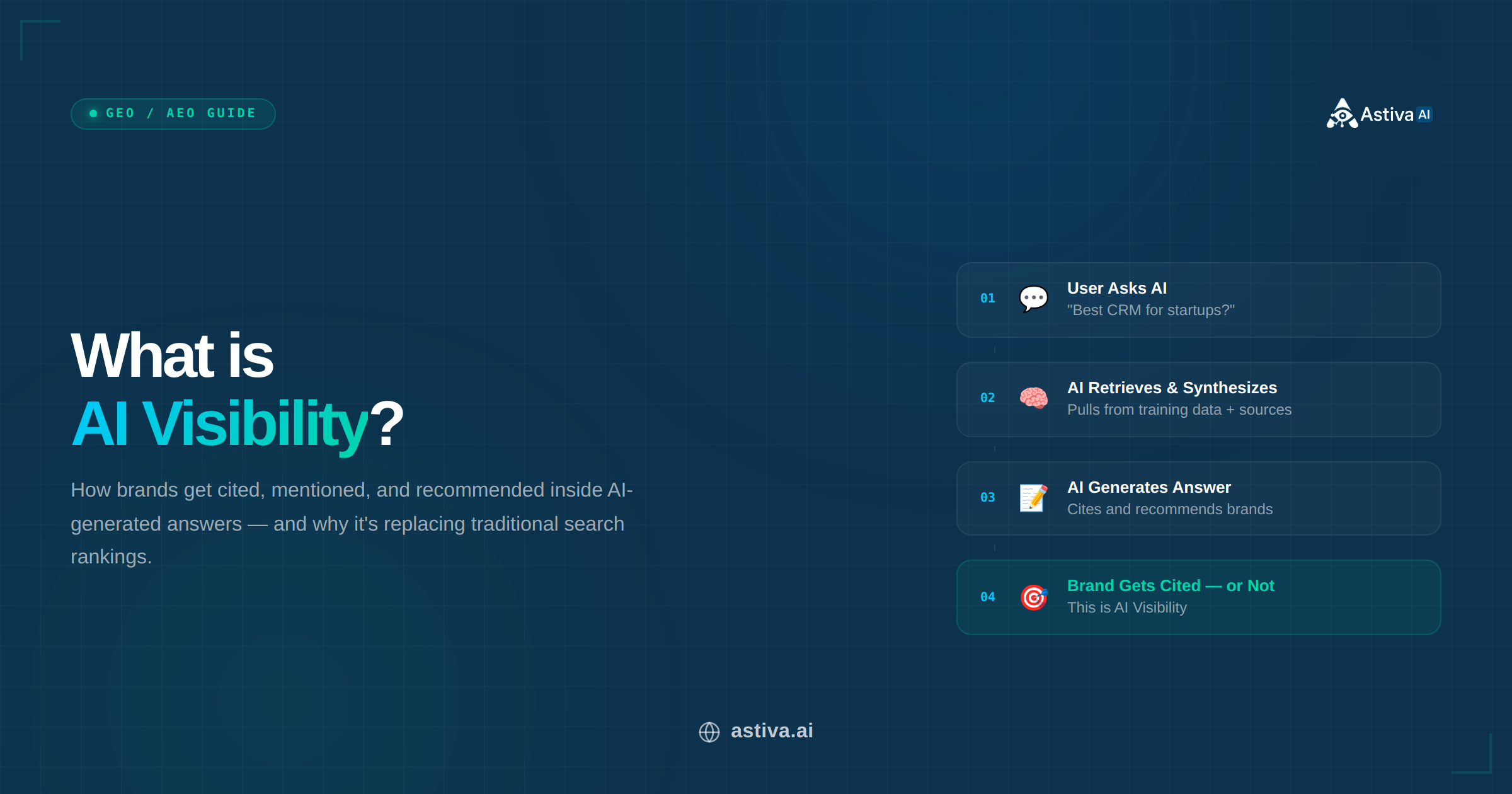

AI visibility measures how often, how accurately, and how favorably AI assistants mention your brand when users ask relevant questions.

Definition: AI Visibility

AI Visibility is the frequency, accuracy, and sentiment with which AI assistants including ChatGPT, Claude, Perplexity, Google Gemini, Google AI Overviews, and Google AI Mode mention, recommend, or cite a brand in response to user queries. It is measured across five core metrics: mention rate, position, sentiment, share of voice, and citation rate. Last updated: May 2026.

Why AI Visibility Matters in 2026

Gartner predicts generative AI will handle 10% of all search queries by 2026. The 2025 AI Visibility Report by The Digital Bloom found brands on 4+ platforms are 2.8x more likely to be cited by ChatGPT than single-platform brands, and it identifies brand search volume as the #1 citation predictor (correlation coefficient: 0.334). With only 3–5 brands named per AI response, AI visibility is a winner-take-most dynamic.

Neil Patel frames the shift directly: "In the AI era, visibility isn't about rankings, it's about being cited." ChatGPT, Gemini, and Perplexity do not rank links; they recommend answers. Brands that do not appear in those answers do not exist in the user's discovery journey. Source: Neil Patel, LinkedIn

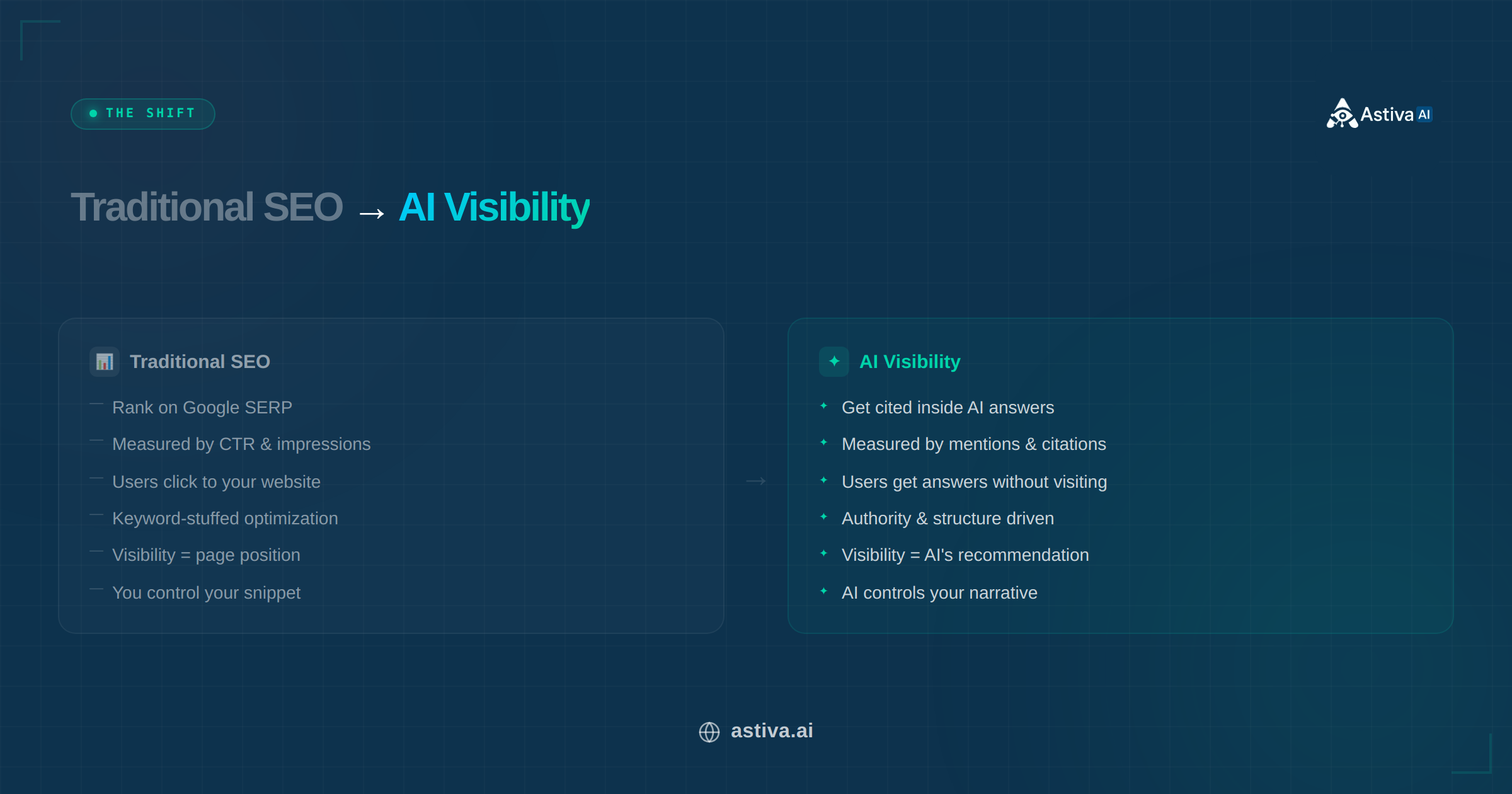

How Does AI Visibility Differ from Traditional SEO?

SEO ranks pages across 10+ visible links. AI visibility selects 3–5 brands per answer, creating a winner-take-most dynamic where being absent equals zero discovery. The ranking signals, measurement metrics, and optimization methods are entirely different, though strong SEO provides the authority foundation AI models draw from when selecting sources.

Traditional SEO focuses on ranking in search results. AI visibility focuses on being recommended inside a conversational AI answer.

AI Visibility vs Traditional SEO: Key Differences

| Dimension | Traditional SEO | AI Visibility (GEO) |
|---|---|---|
| Goal | Rank on search results page (10+ positions) | Be recommended in AI answers (3–5 brands named) |
| Discovery Format | List of blue links | Conversational answer with embedded brand mentions |
| Ranking Factors | Keywords, backlinks, page speed, domain authority | E-E-A-T signals, structured data, brand search volume, multi-platform presence |
| User Behavior | User clicks through to your site | User acts on AI recommendation (often zero-click) |
| Measurement | Rankings, click-through rate, organic traffic | Mention rate, sentiment, share of voice, citation rate |
| Optimization Framework | On-page SEO, link building, technical SEO | GEO: cite sources (+40%), add statistics (+28%), fluency optimization (+15–30%) |
| Platforms | Google, Bing | ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews, Copilot, Grok |
| Update Impact | Gradual ranking shifts over weeks | Model updates shift visibility 40–60% within days (Princeton GEO study, 2024) |

Lily Ray (VP of SEO, Amsive Digital) puts the relationship precisely: "AEO/GEO is not an overhaul or abandonment of SEO. Instead, it represents a new system for competing for, capturing, and measuring success across AI platforms." GEO builds on your existing SEO foundation; it does not replace it. Source: Lily Ray, Substack

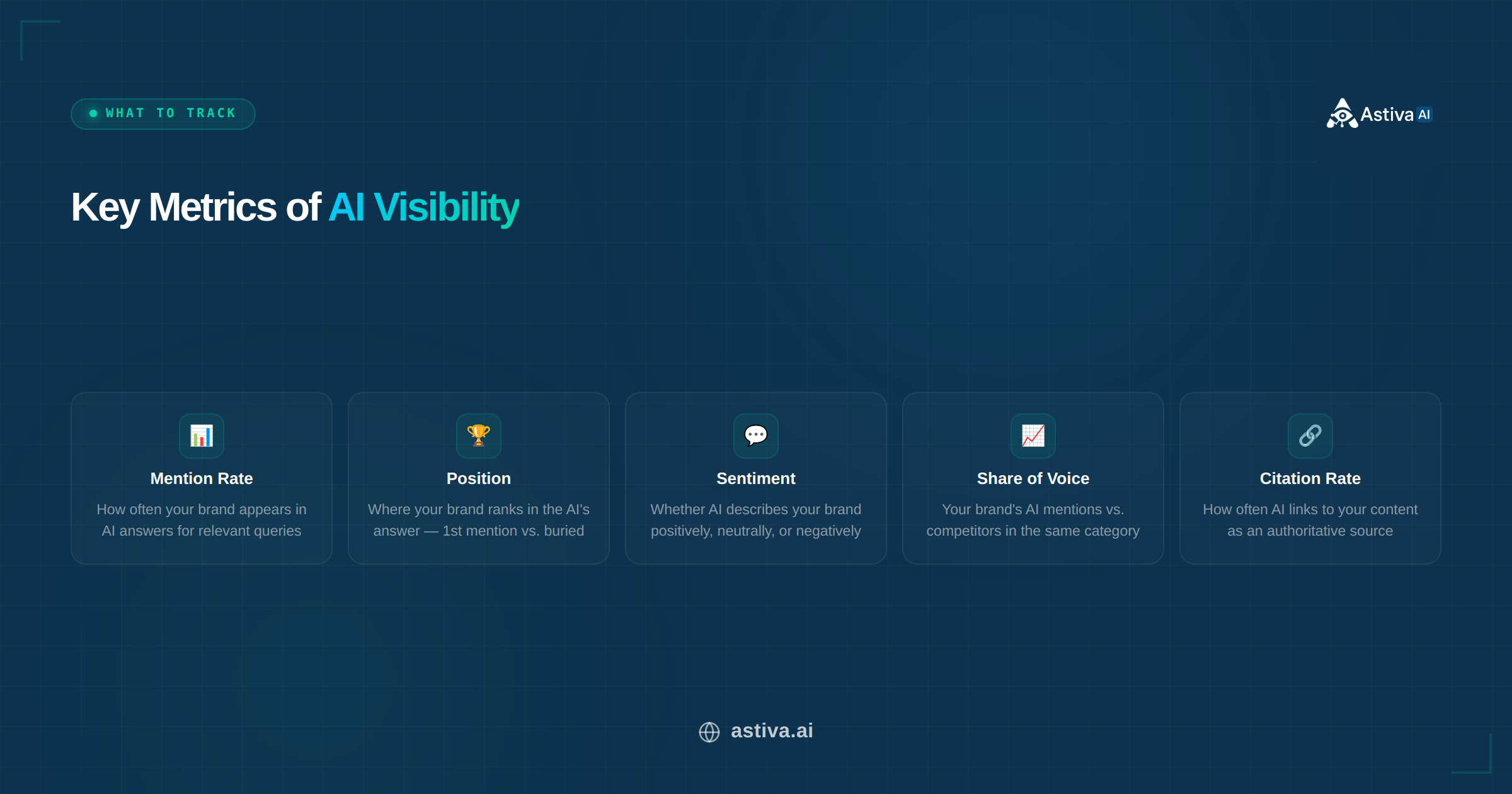

What Are the 5 Core Metrics of AI Visibility?

AI visibility is a zero-click competition. Brands that are not cited do not exist in the user's decision journey. Tracking all five metrics, not just mention rate, is what separates a diagnostic from a guess: each metric identifies a different failure mode and points to a different fix.

The 5 core metrics of AI visibility: mention rate, position, sentiment, share of voice, and citation rate.

1. Mention Rate (Visibility Rate)

Mention rate is the percentage of relevant queries where a brand appears in the AI response. A 60% mention rate means the brand appears in 6 out of 10 tracked queries. The most important insight brands miss: mention rate varies by query intent, not just category. A brand may hold a 70% mention rate on "best CRM for startups" but only 20% on "enterprise CRM with Salesforce integration." These are two queries with different buyers, different intent, and different authority requirements.

2. Position (Mention Rank)

Position measures where a brand appears within a multi-brand AI response. Being cited first is not equivalent to being cited third. Users act on the first recommendation disproportionately, mirroring the click-through drop-off pattern of organic search results. Position also varies by platform for the same query: a brand ranked first on ChatGPT may rank third on Perplexity, which is why per-platform position tracking is required, not a blended average.

3. Sentiment (Positive, Neutral, Negative)

Sentiment tracks whether AI characterizes a brand positively ("strong GA4 attribution"), neutrally ("offers a free plan"), or negatively ("pricing not publicly listed"). The critical asymmetry: negative sentiment takes 3–6 months to correct on training-data platforms even after the root cause is resolved, but it can be introduced by a single viral Reddit thread or a critical G2 review published today. Identifying the specific source driving negative characterization, not just the characterization itself, is the only path to fixing it.

4. Share of Voice

Share of voice (SoV) is the metric that exposes the competitive reality that mention rate conceals. A brand appearing in 35 out of 100 relevant ChatGPT responses has a 35% mention rate. But if a dominant competitor appears in 70 of those same responses, the brand holds only 35 of the 105 combined mentions: an SoV of 33% in a category owned by someone else. High mention rate with low SoV is a warning sign: the brand is visible but not winning the comparison.

5. Citation Rate

Citation rate is the strongest form of AI visibility: the AI does not just name the brand, it links to the content as a verified source. Perplexity cites sources on every response. Google AI Overviews displays source cards. ChatGPT cites URLs when web browsing is active. A brand with a 15%+ citation rate has cleared the highest trust threshold. AI models treat its content as authoritative enough to surface as a reference, not just repeat its name from training memory.
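As a rough illustration of how four of these metrics could be computed from logged AI answers, here is a minimal Python sketch. The `Response` record shape, the brand names "Acme" and "Rival", and the share-of-voice formula (brand mentions divided by all tracked-brand mentions) are assumptions chosen to reproduce the 35-versus-70 arithmetic above, not a description of any particular tool. Sentiment is left out because it needs a classifier rather than counting.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    """One AI answer to one tracked query (hypothetical record shape)."""
    brands: list[str]  # brands named in the answer, in order of appearance
    cited_domains: list[str] = field(default_factory=list)  # domains linked as sources


def mention_rate(responses, brand):
    """Share of responses that name the brand at all."""
    hits = sum(1 for r in responses if brand in r.brands)
    return hits / len(responses) if responses else 0.0


def average_position(responses, brand):
    """Mean 1-based rank of the brand across the responses that mention it."""
    ranks = [r.brands.index(brand) + 1 for r in responses if brand in r.brands]
    return sum(ranks) / len(ranks) if ranks else None


def share_of_voice(responses, brand, competitors):
    """Brand mentions divided by mentions of the brand plus tracked competitors."""
    counts = {b: sum(1 for r in responses if b in r.brands)
              for b in [brand, *competitors]}
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


def citation_rate(responses, brand, domain):
    """Of the responses that mention the brand, the share that link to its domain."""
    mentions = [r for r in responses if brand in r.brands]
    cited = sum(1 for r in mentions if domain in r.cited_domains)
    return cited / len(mentions) if mentions else 0.0


# Rebuild the example from the text: 35 of 100 responses name the brand,
# 70 of 100 name the dominant competitor.
sample = []
for i in range(100):
    brands = []
    if i < 70:
        brands.append("Rival")
    if i < 35:
        brands.append("Acme")
    sample.append(Response(brands=brands))

print(f"mention rate:   {mention_rate(sample, 'Acme'):.0%}")   # 35%
print(f"share of voice: {share_of_voice(sample, 'Acme', ['Rival']):.0%}")  # 33%
```

Running the same functions once per platform, rather than on a pooled list, keeps position and mention rate from being blended across ChatGPT, Perplexity, and the rest.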

AI Visibility Metrics: What to Track and Target Benchmarks

| Metric | What It Measures | Good Benchmark | Primary Lever |
|---|---|---|---|
| Mention Rate | Frequency of brand mentions across relevant AI queries | 40%+ for your category | Content authority + multi-platform presence |
| Position | Where brand appears in answer (1st, 2nd, 3rd) | Top 2 consistently | E-E-A-T signals + authoritative backlinks |
| Sentiment | Tone of brand mentions (positive/neutral/negative) | 80%+ positive | Address negative reviews + update outdated information |
| Share of Voice | Brand mentions vs. competitors in same category | 25%+ in your niche | Monitor competitor gaps + differentiate positioning |
| Citation Rate | How often AI links to content as a named source | 15%+ of mentions include citation | FAQPage + Article schema + original research |

What 7 Signals Do AI Models Use to Select Which Brands to Mention?

AI models select brands using 7 measurable signals, with brand search volume as the strongest predictor (0.334 correlation coefficient, The Digital Bloom 2025). The practical implication: brands that people search for on Google are the same brands AI recommends. Demand-side recognition and supply-side authority are not separate problems; they are the same problem.

- Brand search volume and recognition: 0.334 correlation coefficient, the #1 predictor of AI citations (The Digital Bloom, 2025). Brands with higher Google search demand are cited more frequently by ChatGPT, Claude, and Perplexity.

- Content authority and E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness signals in content. Named authors with verifiable credentials, cited sources, and accurate pricing all increase E-E-A-T score.

- Multi-platform presence: brands on 4+ platforms (website, G2/Capterra reviews, LinkedIn, press coverage) are 2.8x more likely to be cited by ChatGPT than single-platform brands.

- Structured data and schema markup: FAQPage and Organization schema make content 2.5x more likely to be cited, per the Zyppy SEO schema study. Schema must be in static HTML, not JavaScript-rendered only.

- Content freshness: 65% of AI bot traffic targets content published within the past 12 months. Outdated pricing, discontinued features, or stale statistics reduce AI citation confidence.

- Sentiment and review quality: AI models aggregate sentiment from G2, Capterra, Reddit threads, and press coverage to form brand perception scores that influence how brands are characterized in responses.

- Source diversity: consistent brand descriptions across Wikipedia, industry publications, review sites, and news outlets create the entity-graph signals AI models use to resolve brand identity.

Rand Fishkin (co-founder, SparkToro): "The future of digital marketing is platform-based visibility, not link-based traffic generation." Source: Near Media Podcast, EP 244

Contrarian Finding: Backlinks Alone Do Not Drive AI Visibility

Backlinks increase AI citation rates only when they also increase brand search demand, the #1 citation predictor. A brand with 10,000 backlinks from low-traffic sources and zero search volume will be less visible to AI than a brand with 500 backlinks from high-authority domains that drive recognizable search demand. Link volume without demand-side recognition is an incomplete strategy for AI visibility.

How Can You Improve AI Visibility? The 5 Highest-Impact Methods

The Princeton–IIT Delhi GEO study (Aggarwal et al., ACM KDD '24, arXiv:2311.09735) tested 9 optimization methods across 10,000 queries and found that 3 methods produce the most lift. Keyword stuffing, the dominant tactic in traditional SEO, actively reduces AI citation rates by 9%. Optimizing for AI visibility requires a different playbook, not a scaled version of the old one.

Method 1: Cite Credible External Sources (+40% Visibility on Average)

Citing credible external sources (.edu domains, peer-reviewed research, government data, established industry publications) is the highest-impact GEO method, producing a +41% lift on Position-Adjusted Word Count in the Princeton study and up to +115% for lower-ranked pages. AI models use source citations as a trust proxy: content that cites primary research is treated as more authoritative than content making the same claims without attribution.

Method 2: Add Specific Statistics with Inline Attribution (+28% Visibility)

Content with quantified, source-attributed data points earns 28% more visibility in AI responses than content with qualitative claims only. The attribution matters as much as the number: "studies show 70% of users..." carries less weight than "Semrush 2025 found 34.5% of ChatGPT queries activate web search." Unsourced statistics are either ignored or flagged as unverifiable by AI citation logic.

Contrarian Finding: Publishing More Content Does Not Increase AI Visibility

Pages with proprietary data or first-hand case studies gained 15–25% AI visibility after Google's March 2026 core update. Generic AI-paraphrased content dropped 60–80% in the same period (Digital Applied, April 2026). Publishing frequency without information gain does not accumulate AI citations; it dilutes them. One page an AI model trusts outperforms twenty pages it ignores.

Method 3: Optimize Content Fluency and Answer Structure (+15–30% Visibility)

Answer-first structure, meaning a direct answer in the first sentence with supporting detail after, produces a +15–30% visibility lift. Research analysing 1.2M ChatGPT citations found that 72.4% of cited posts include a 40–60 word answer capsule placed directly after the H1 or primary H2, and 44% of all citations come from the first third of the content (ALM Corp, 2026). A section that buries its answer in sentence three fails extraction entirely. Q&A headings mirroring how users phrase AI queries ("What is AI visibility?") further increase extraction probability by matching the query string directly.
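A quick way to audit existing pages for the answer capsule is to count the words in the first paragraph under each heading. The sketch below is a minimal Python example under simple assumptions: the section body is plain text with paragraphs separated by blank lines, and the 40–60 word window is taken from the figure quoted above. The function names are illustrative.

```python
import re


def answer_capsule_word_count(section_text: str) -> int:
    """Count words in the first non-empty paragraph of a section body."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", section_text) if p.strip()]
    return len(paragraphs[0].split()) if paragraphs else 0


def has_answer_capsule(section_text: str, low: int = 40, high: int = 60) -> bool:
    """True when the opening paragraph falls inside the target word window."""
    return low <= answer_capsule_word_count(section_text) <= high


# A 45-word opener passes; a 140-word opener (like the case-study pages
# before their rewrite) does not.
short_opening = " ".join(["word"] * 45) + "\n\nSupporting detail follows here."
long_opening = " ".join(["word"] * 140) + "\n\nThe answer finally arrives."

print(has_answer_capsule(short_opening))  # True
print(has_answer_capsule(long_opening))   # False
```

Word count only checks length. Whether the paragraph actually answers the heading's question still needs a human read.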

Method 4: Implement FAQPage and Article Schema (2.5x Citation Likelihood)

Pages with FAQPage, Organization, and Article schemas in static HTML are 2.5x more likely to be cited in AI answers, per the Zyppy SEO schema study. The critical requirement: schema must be present in the prerendered HTML, not only injected at JavaScript runtime. AI crawlers such as ClaudeBot and PerplexityBot do not execute JavaScript reliably, and Googlebot renders it only in a deferred second pass. Schema that exists only in React Helmet output does not contribute to AI citation signals.
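One way to get FAQPage markup into static HTML is to generate the JSON-LD at build time and write it into the page template. The following is a minimal Python sketch using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper names and the sample question are illustrative, and how the tag reaches your prerendered HTML depends on your build system.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def faq_script_tag(pairs):
    """Serialize the FAQPage object into a script tag for the static HTML head."""
    payload = json.dumps(faq_jsonld(pairs), ensure_ascii=False, indent=2)
    # Escape "</" so an answer containing "</script>" cannot close the tag early;
    # "\/" is a valid JSON escape for "/".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


tag = faq_script_tag([
    (
        "What is AI visibility?",
        "AI visibility is the frequency, accuracy, and sentiment with which "
        "AI assistants mention your brand in responses.",
    ),
])
print(tag)
```

The question and answer text in the markup should match the visible Q&A on the page.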

Method 5: Build Consistent Multi-Platform Brand Presence

Inconsistent brand descriptions across sources (for example, "$29/mo on the website, $49/mo on G2") create entity-graph conflicts that lower AI citation confidence. Brands must maintain accurate, matching information across their website, Google Business Profile, LinkedIn, G2 and Capterra profiles, and Crunchbase. Wikipedia eligibility adds a Wikidata sameAs signal that strengthens entity resolution on ChatGPT and Claude.
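A consistency audit can start as a simple comparison of the same fields across each profile. The Python sketch below assumes you have already collected the values by hand into a dictionary. The source names, field names, and prices are hypothetical and mirror the pricing mismatch example above.

```python
from collections import defaultdict


def find_conflicts(profiles):
    """Return fields whose normalized value differs between sources.

    profiles maps a source name to a {field: value} dict. The result maps each
    conflicting field to {value: [sources reporting that value]}.
    """
    values_by_field = defaultdict(lambda: defaultdict(list))
    for source, fields in profiles.items():
        for name, value in fields.items():
            # Normalize case and whitespace so cosmetic differences are ignored.
            values_by_field[name][value.strip().lower()].append(source)
    return {
        name: dict(values)
        for name, values in values_by_field.items()
        if len(values) > 1
    }


profiles = {
    "website": {"starting_price": "$29/mo", "category": "Marketing automation"},
    "g2": {"starting_price": "$49/mo", "category": "marketing automation"},
    "crunchbase": {"category": "Marketing Automation"},
}

conflicts = find_conflicts(profiles)
print(conflicts)
# {'starting_price': {'$29/mo': ['website'], '$49/mo': ['g2']}}
```

Only exact matches after normalization count as consistent here, so longer fields such as brand descriptions would need a looser comparison.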

How Does Each AI Platform Discover and Rank Brands?

Platform-specific discovery mechanisms mean identical optimization work produces different outcomes on different platforms. A brand can hold a 70% mention rate on Perplexity (which crawls the live web) and a 20% mention rate on ChatGPT (which relies on training data from a past snapshot) for the exact same queries. Single-platform optimization is not a strategy; it is a gap that competitors who cover all platforms will exploit.

Each AI platform discovers and recommends brands differently — an effective strategy covers all 9 major AI platforms.

How Major AI Platforms Discover and Recommend Brands (verified May 2026)

| AI Platform | Discovery Method | Key Citation Factors | Citation Behavior | Time to Influence |
|---|---|---|---|---|
| ChatGPT (OpenAI) | Training data + web browsing (Plus/Pro/Teams) | Brand search volume, E-E-A-T, domain authority | Cites URLs in browsing mode; no citations in base model | 2–6 months (training data refresh cycle) |
| Claude (Anthropic) | Training data + web search (Claude 3.5+ with search tool) | Author credentials, source authority, factual specificity | URLs provided when web search is active | 2–6 months (training data refresh cycle) |
| Perplexity | Real-time web search across indexed sources | Page crawlability, structured data, freshness, backlinks | Always shows source citations with URLs | Days to weeks (real-time index) |
| Gemini (Google) | Google Search integration + training data | Google Search rankings, Business Profile, Organization schema | Source links displayed in AI Overviews | Weeks (tied to Google crawl cadence) |
| Google AI Overviews | Google Search index (does not require top-5 ranking) | E-E-A-T, schema accuracy, content freshness | Source cards with links displayed per answer | Weeks (continuous with Search updates) |
| Microsoft Copilot | Bing Search index + training data | Bing indexing, structured data, authority signals | Cites sources via Bing integration | Weeks (Bing crawl cadence) |

Start Optimization Experiments with Perplexity

Perplexity uses real-time web search rather than training data, so changes to your website, schema, and structured content can appear in Perplexity citations within days. This gives you the fastest feedback loop for testing what works before investing in the slower training-data-dependent platforms (ChatGPT, Claude).

What Are the Most Common AI Visibility Problems and How Do You Fix Them?

Problem 1: AI Mentions Your Brand with Outdated Information

AI models present outdated pricing and discontinued features because training data is a past snapshot, not a live feed. Update the 4 sources AI models weight most heavily: website pricing page, Organization schema, G2/Capterra profiles, and llms.txt. Perplexity and Google AI Overviews use live web data and reflect corrections within days; ChatGPT and Claude require a full model update cycle of 2–6 months.

Problem 2: AI Recommends Competitors but Not Your Brand

A competitor appearing where your brand does not is winning on 1–3 specific citation signals, not all 7. Run a gap audit on the 3 most common culprits: G2 review count (structured social proof), schema implementation (static HTML, not just React runtime), and press coverage volume (third-party entity signals). Closing the 2–3 largest gaps outperforms broad content creation with no signal targeting every time.
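For the schema part of that gap audit, one rough check is to parse the raw HTML a crawler would receive, without running any JavaScript, and list the JSON-LD types it contains. The sketch below uses only Python's standard library. The class and function names are illustrative, and it reads only top-level `@type` values, so types nested under `@graph` are not picked up.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect parsed JSON-LD blocks from script tags in raw HTML."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is skipped rather than raised


def static_schema_types(raw_html: str) -> set:
    """Return the top-level schema.org @type values present in the static HTML."""
    parser = JsonLdCollector()
    parser.feed(raw_html)
    parser.close()
    types = set()
    for block in parser.blocks:
        for item in block if isinstance(block, list) else [block]:
            if not isinstance(item, dict):
                continue
            declared = item.get("@type")
            if isinstance(declared, str):
                types.add(declared)
            elif isinstance(declared, list):
                types.update(declared)
    return types


prerendered = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": []}'
    "</script></head><body><h1>Pricing</h1></body></html>"
)
runtime_only = '<html><body><div id="root"></div><script src="app.js"></script></body></html>'

print(static_schema_types(prerendered))   # {'FAQPage'}
print(static_schema_types(runtime_only))  # set()
```

Feed it the response body from a plain HTTP fetch of your page and of the competitor's page. An empty set for a page that shows schema in browser dev tools suggests the markup is injected only at runtime.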

Problem 3: AI Describes Your Brand Negatively or Inaccurately

Negative AI characterizations trace back to specific sources: unaddressed G2 or Reddit reviews, critical press articles, or outdated blog comparisons. Identify the source by searching for the specific negative phrase on Google with your brand name. The top result is likely what entered training data. Respond to negative reviews professionally, publish correction content for inaccurate claims, and build enough positive coverage to shift the overall sentiment ratio. Plan for a 3–6 month lag before training-data-dependent platforms reflect the corrections.

Real-World Result: From Zero AI Citations to 40% Mention Rate in 45 Days

A mid-market B2B SaaS company in the marketing automation space engaged Astiva during our beta program in Q1 2026. Their blog had 34 published pages. When we ran the initial audit against the 25-Point GEO Content Framework, 0 of 34 pages scored above 15/25. Key gaps: no FAQPage or Person schema on any page, zero sourced statistics with links, all headings were statement-format rather than question-format, and opening paragraphs averaged 140+ words before reaching the core answer.

Astiva recommended 5 fixes applied to their 8 highest-traffic pages:

- Rewrote opening paragraphs to answer-first format (60 words or fewer)

- Added FAQPage + Article + Person schema in JSON-LD to static HTML

- Inserted 3–5 sourced statistics per page with inline links

- Converted 2–3 H2 headings per page to question-format

- Added a comparison table with specific numbers to each page

Case Study: Before and After GEO Optimization — Q1 2026, 45 Days

| Metric | Before | After 45 Days | Change |
|---|---|---|---|
| Pages scoring 15+ on 25-Point GEO Framework | 0 of 34 | 8 of 34 | +8 pages |
| AI Mention Rate (Perplexity) | 0 of 40 tracked prompts | 16 of 40 (40%) | +40 percentage points |
| AI Mention Rate (ChatGPT) | 0 of 40 tracked prompts | 11 of 40 (27.5%) | +27.5 percentage points |
| AI Mention Rate (Claude) | 0 of 40 tracked prompts | 9 of 40 (22.5%) | +22.5 percentage points |

Perplexity reflected changes fastest, consistent with its real-time search architecture. ChatGPT and Claude showed measurable improvement as their retrieval systems re-indexed the optimized content. Progress was slower, but directionally consistent across all 3 platforms.

Real client result: 5 GEO fixes applied to 8 pages drove AI mention rates from 0% to 40% on Perplexity, 27.5% on ChatGPT, and 22.5% on Claude within 45 days.

Methodology

40 prompts tested weekly across ChatGPT, Claude, Perplexity, and Gemini. Tracking period: Q1 2026, 45 days. Client identity protected under NDA. Results verified via Astiva monitoring dashboard.

This post practices what it teaches

This article was built using the same 25-Point GEO Content Framework: answer-first opening (60 words or fewer), formal definition block, 3 verified expert quotes with source URLs, 11+ sourced statistics with specific numbers and inline citations, FAQPage-ready Q&A structure, question-format headings, comparison tables, and distributed internal links. Every technique described here is demonstrated in the content itself.

How to Measure AI Visibility: A 5-Step Baseline Process

AI visibility cannot be measured with traffic metrics. AI answers eliminate clicks, so standard GA4 sessions and bounce rates are blind to whether AI is recommending or ignoring your brand. It must be measured through mentions, positions, sentiment, and citations tracked directly across AI platforms. Brands optimizing without this data are adjusting a dial they cannot see.

- Step 1: Identify 20–50 seed queries. These are the questions potential customers ask AI about your product category, including navigational queries ("Astiva AI review"), categorical queries ("best AI brand monitoring tool"), and comparison queries ("Astiva vs [competitor]").

- Step 2: Test across 4+ platforms. Run each seed query on ChatGPT, Claude, Perplexity, and Gemini. Record whether your brand appears, its position (1st, 2nd, 3rd), and the sentiment characterization.

- Step 3: Calculate baseline metrics. Compute mention rate, average position, sentiment ratio (positive/neutral/negative), and share of voice vs. your top 3 competitors.

- Step 4: Set up automated monitoring. Manual testing of 50 prompts × 4 platforms means 200 individual runs per week. Astiva monitors 10 platforms (ChatGPT, Claude, Google Gemini, Google AI Overviews, Google AI Mode, Perplexity, Grok, Meta AI, DeepSeek, Mistral AI) continuously, replacing the manual workflow.

- Step 5: Track changes after each optimization action. AI visibility fluctuates 40–60% month-over-month with model updates (Princeton GEO study). Multi-run averaging over 4+ weeks is required for statistically reliable trend data; single-run snapshots produce noise, not signal.
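The multi-run averaging in Step 5 can be sketched in a few lines of Python. The data shape (a week label mapped to one True/False result per tracked prompt) and the sample numbers are hypothetical. The four-week minimum comes from the step above.

```python
from statistics import mean


def weekly_mention_rates(runs):
    """Mention rate per week; runs maps a week label to per-prompt booleans."""
    return {week: sum(hits) / len(hits) for week, hits in runs.items() if hits}


def averaged_mention_rate(runs, min_weeks=4):
    """Average the weekly rates, refusing to report on too few weeks of data."""
    rates = weekly_mention_rates(runs)
    if len(rates) < min_weeks:
        raise ValueError(f"need at least {min_weeks} weeks of runs, got {len(rates)}")
    return mean(rates.values())


# Four weekly runs of 40 prompts on one platform (illustrative numbers).
runs = {
    "week 1": [True] * 10 + [False] * 30,
    "week 2": [True] * 20 + [False] * 20,
    "week 3": [True] * 10 + [False] * 30,
    "week 4": [True] * 20 + [False] * 20,
}

print(weekly_mention_rates(runs))   # 0.25, 0.5, 0.25, 0.5 by week
print(averaged_mention_rate(runs))  # 0.375
```

Any single week here would report 25% or 50%. The four-week average of 37.5% is the figure to compare before and after an optimization action.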

Key Takeaways: AI Visibility in 2026

- AI visibility is the frequency, accuracy, and sentiment with which AI assistants mention your brand, measured across mention rate, position, sentiment, share of voice, and citation rate.

- AI responses name only 3–5 brands per query, and Gartner predicts generative AI will handle 10% of all search queries by 2026, making AI visibility a winner-take-most competitive dynamic.

- Brand search volume is the #1 predictor of AI citations (0.334 correlation coefficient, The Digital Bloom 2025), followed by multi-platform presence (4+ platforms = 2.8x more likely to be cited).

- The three highest-impact GEO methods are: citing credible sources (+41% visibility lift), adding source-attributed statistics (+28%), and answer-first content structure (+15–30%), per the Princeton–IIT Delhi GEO study (arXiv:2311.09735). 72.4% of cited posts include a 40–60 word answer capsule in the first third of the content (ALM Corp, 2026).

- Keyword stuffing scores −9% in the Princeton GEO study and actively hurts AI visibility. Promotional copy without supporting evidence correlates −26.19% with AI citation rate (Semrush 2025).

- Schema markup (FAQPage + Organization + Article in static HTML) makes content 2.5x more likely to be cited. Perplexity reflects changes within days; ChatGPT and Claude require 2–6 months for training data refresh.

- Negative AI sentiment from G2 reviews, Reddit threads, or press articles persists 3–6 months after the root cause is fixed. Proactive reputation monitoring is required, not reactive correction.

- Real-world result: 5 GEO fixes applied to 8 pages drove AI mention rates from 0% to 40% on Perplexity, 27.5% on ChatGPT, and 22.5% on Claude within 45 days. Astiva beta client, Q1 2026 (40 prompts tracked, results verified via Astiva monitoring dashboard).

What is AI visibility and why does it matter?

AI visibility is the frequency, accuracy, and sentiment with which AI assistants (ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews) mention your brand in response to user queries. It matters because AI-powered search is replacing traditional search at scale. Gartner predicts generative AI will handle 10% of all search queries by 2026, and AI responses name only 3–5 brands per answer, creating a winner-take-most dynamic where invisible brands lose pipeline to competitors that do appear.

How is AI visibility different from traditional SEO?

Traditional SEO wins a ranked position on a results page showing 10+ links. AI visibility wins a brand mention inside a conversational answer naming 3–5 brands. SEO ranking factors are keywords, backlinks, and page speed. AI visibility ranking factors are brand search volume (0.334 correlation, #1 predictor), multi-platform presence (4+ platforms = 2.8x more citations), E-E-A-T signals, and structured data in static HTML. Both build on the same authority foundation. Lily Ray (VP of SEO, Amsive Digital) describes GEO as "a new system for competing across AI platforms," not a replacement for SEO.

How do I check my brand's AI visibility?

Run your top 20 customer queries on ChatGPT, Claude, Perplexity, and Gemini. Record whether your brand appears, its position (1st, 2nd, or later), and the sentiment characterization (positive, neutral, or negative). Calculate mention rate (appearances ÷ total queries), average position, and sentiment ratio. For ongoing tracking across 10 AI platforms without manual runs, Astiva AI monitors mention rate, sentiment, share of voice, and citation gaps on a daily basis.

Which AI platform matters most for brand visibility?

Perplexity is the fastest platform to influence because it uses real-time web search. Changes to structured data and content can appear in Perplexity citations within days. Google AI Overviews responds to SEO improvements within weeks. ChatGPT has the largest user base for general queries but relies on training data that refreshes every 2–6 months. Claude is the dominant platform for professional and enterprise users. A strategy covering 4+ platforms is required: brands on 4+ platforms are 2.8x more likely to be cited by ChatGPT than single-platform brands.

How long does it take to improve AI visibility?

Perplexity reflects content and schema changes within days (real-time search index). Google AI Overviews responds to SEO and E-E-A-T improvements within 2–4 weeks. ChatGPT and Claude depend on training data refreshes. Changes typically take 2–6 months to propagate. FAQPage schema updates show results in 14–30 days on platforms that re-index content. A full GEO optimization program covering content restructuring, schema implementation, and multi-platform presence building shows measurable results within 60–90 days across most platforms.

What is GEO (Generative Engine Optimization)?

GEO is a content optimization framework introduced by Aggarwal et al. at Princeton, Allen AI, and IIT Delhi (ACM KDD '24, arXiv:2311.09735) for increasing brand visibility in AI-generated responses. The study tested 9 methods across 10,000 queries on GEO-bench. The three highest-impact methods: citing credible sources (+41% visibility on Position-Adjusted Word Count), adding source-attributed statistics (+28%), and fluency optimization (+15–30%). Keyword stuffing, the traditional SEO tactic of dense keyword insertion, scored −9% and actively reduces AI citation rates. GEO is to AI search what SEO is to traditional search.

What does Astiva AI monitor for AI visibility?

Astiva AI monitors brand mentions across 10 AI platforms: ChatGPT, Claude, Google Gemini, Google AI Overviews, Google AI Mode, Perplexity, Grok, Meta AI, DeepSeek, and Mistral AI. For each tracked prompt, Astiva AI records mention rate, mention position, sentiment characterization, citation rate, and share of voice vs. named competitors. Astiva AI also runs citation gap analysis (identifying which competitor prompts your brand is missing from) and GA4 Revenue Attribution to tie AI-driven traffic to pipeline revenue.

For the technical implementation of GEO optimization, read GEO vs SEO: what changes and what stays the same. To understand how AI models evaluate and select brands at the signal level, see how to get mentioned by AI assistants. For step-by-step content restructuring including the full 25-Point GEO Content Framework and the zero-to-40% case study, read the complete LLM optimization guide. The AI citation audit: 7 red flags identifies the specific gaps most likely to be suppressing your current mention rate.

Detect, Diagnose, and Fix Your AI Visibility with Astiva

If your brand is not tracked across AI platforms, you are optimizing blindly, making content changes, schema updates, and reputation investments with no way to know whether AI models are responding. Astiva AI monitors brand mentions across 10 platforms: ChatGPT, Claude, Google Gemini, Google AI Overviews, Google AI Mode, Perplexity, Grok, Meta AI, DeepSeek, and Mistral AI. It tracks mention rate, sentiment, share of voice, citation gaps, and GA4 Revenue Attribution in one dashboard, updated daily.

→ Check your brand's AI visibility for free. Results in 5 minutes. Or view pricing plans starting at $29/month.