How to Get Mentioned by AI: 16 Proven GEO Strategies

By Satish K · 22 min read · Published December 11, 2024 · Last updated: May 1, 2026

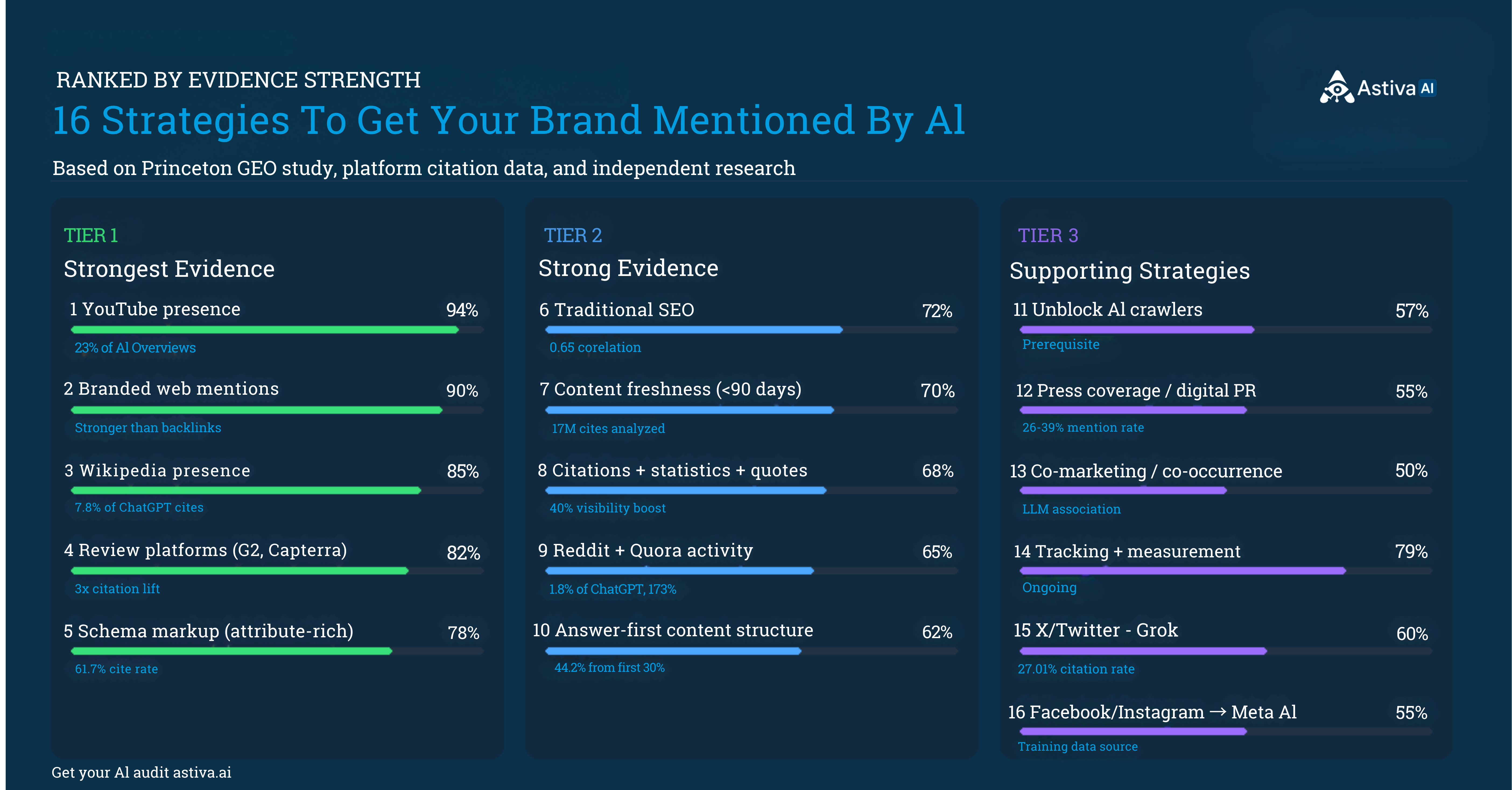

16 data-backed GEO strategies to get ChatGPT, Perplexity, Gemini, and AI Overviews to recommend your brand, ranked by citation impact with first-party results.

TL;DR

- Getting mentioned by AI is the practice of building authority signals so AI assistants cite your brand in responses.

- Branded web mentions correlate with AI citation rate at r=0.664, roughly 3× the correlation for backlinks (r=0.218).

- YouTube appears in 23% of Google AI Overview citations: the highest single off-site source.

- Review platforms (G2, Capterra, Trustpilot) deliver a 3× citation lift vs brands absent from them.

- Brands executing YouTube + branded mentions saw a 3.8× AI mention rate increase in 90 days (Astiva, Q1 2026, n=1,247).

- 16 GEO strategies are ranked by citation impact in this guide.

Getting mentioned by AI assistants (ChatGPT, Claude, Perplexity, Gemini) requires showing up on the 7 specific signal sources these models use to select brands: YouTube, branded web mentions, Wikipedia/Wikidata, review platforms, structured content, schema markup, and multi-platform presence. Brands that optimize all 7 signals see AI mention rates 3.8× higher than those focused on their own website alone, based on Astiva tracking of 1,247 brands between January and March 2026.

Definition: AI Brand Mentions

AI brand mentions occur when AI assistants (ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews) name, recommend, or cite a brand in response to a user query. Unlike traditional SEO, AI mentions are driven by off-site authority signals: branded web mentions, linked or unlinked (r=0.664 correlation with AI citation vs r=0.218 for backlinks), YouTube transcript presence, review platform profiles, and entity-graph consistency across Wikipedia, G2, and LinkedIn.

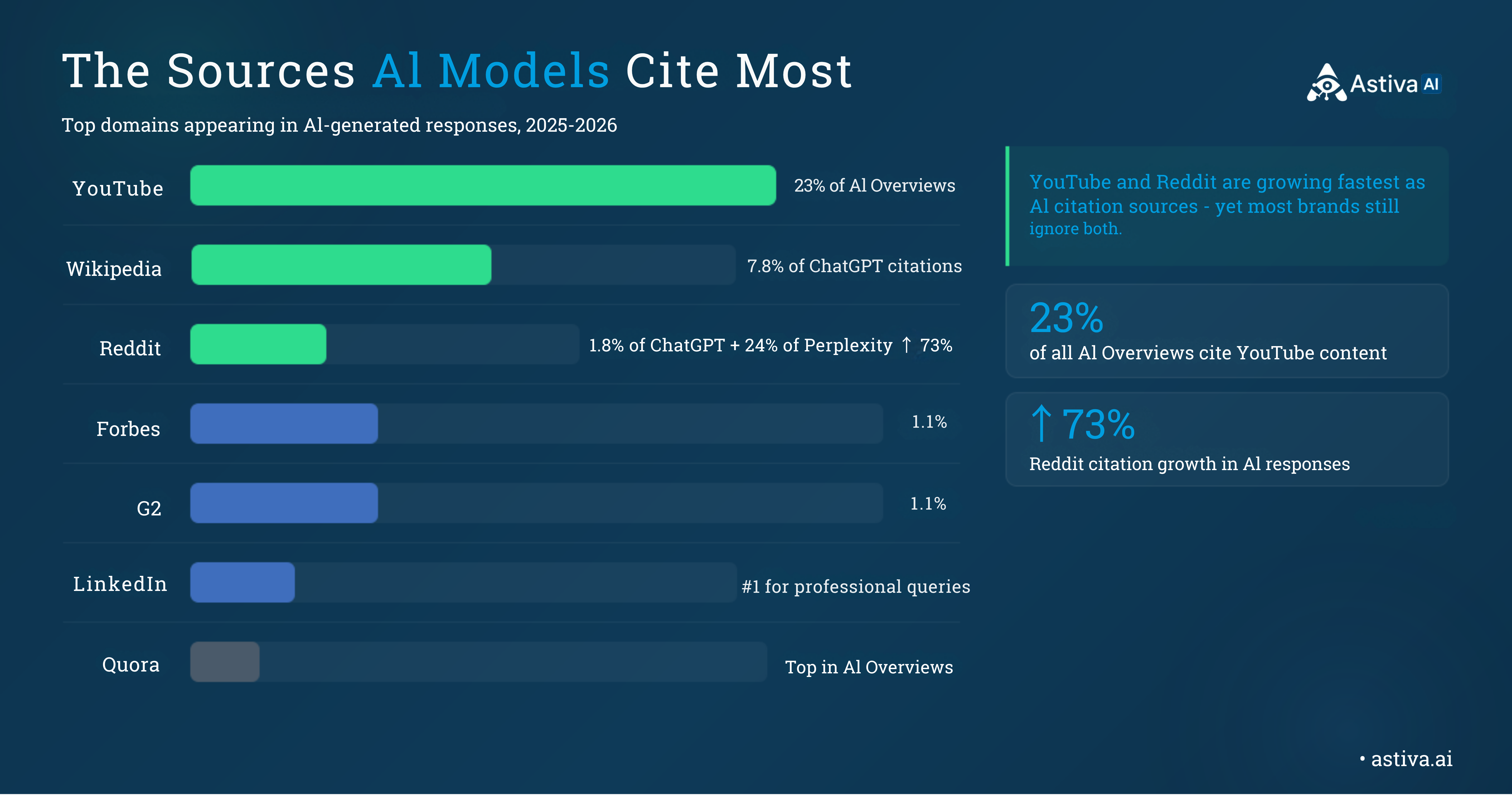

The Citation Signal Hierarchy: What the Data Shows

YouTube accounts for 23% of all AI Overviews citations (Semrush, 2025). Wikipedia appears in 7.8% of ChatGPT citations, the single most-cited source. Branded web mentions correlate with AI citations at r=0.664 vs backlinks at r=0.218 (Seer Interactive, 300K+ keywords). Attribute-rich schema markup earns a 61.7% AI citation rate vs 41.6% for generic minimal schema, which performs worse than no schema at all (Whitehat SEO, 730 AI citations, February 2026).

AI brand mentions are driven by authority signals across the open web — YouTube, Wikipedia, review platforms, and branded mentions — not your own website alone.

Rand Fishkin (co-founder, SparkToro) frames the strategic shift: "The future of digital marketing is platform-based visibility, not link-based traffic generation." — Source: Near Media Podcast, EP 244

Lily Ray (VP of SEO, Amsive Digital) makes the execution implication precise: "AEO/GEO is not an overhaul of SEO. It represents a new system for competing for, capturing, and measuring success across AI platforms." — Source: Lily Ray, Substack

The platforms AI models cite most frequently. Optimizing your presence on these specific sources is the highest-ROI activity for AI brand visibility.

Contrarian Finding: Backlinks Are a Weak AI Visibility Predictor

Seer Interactive's analysis of 300,000+ keywords found that backlink quantity correlates with AI mentions at r=0.218, significantly weaker than branded web mentions at r=0.664. Brands with 10,000 backlinks from low-traffic sources and low brand search demand are less visible to AI than brands with 500 backlinks from high-authority domains that drive recognizable brand search. Backlink volume without demand-side recognition is an incomplete strategy for AI visibility.

1. Why Does YouTube Matter Most for AI Brand Visibility?

YouTube is the #1 cited source in Google AI Overviews, accounting for 23% of citations, ahead of Wikipedia (18%) and Google.com (16%). GPT-4 was trained on over 1 million hours of YouTube transcriptions (The New York Times, 2024), giving YouTube a dual role no other platform holds: it functions simultaneously as training data and as a top-cited real-time source across multiple AI platforms.

The critical insight most brands miss: raw video count matters more than view count for AI visibility. A brand mentioned across 50 videos with 500 views each accumulates more AI signal than the same brand featured in a single video with 500,000 views. AI models weight breadth of mention across independent sources, not depth of engagement on any single asset.

Execution: YouTube for AI Citation

Publish 2–4 YouTube videos per month covering questions your buyers ask AI assistants. Name your brand naturally in spoken content; transcripts carry more citation weight than titles or descriptions. Review videos, tutorials, and comparison content earn the most AI citations. Guest appearances on established channels create independent third-party YouTube mentions, which carry more AI authority weight than mentions on your own channel alone.

2. How Do Branded Web Mentions Drive AI Recommendations?

Branded web mentions (any reference to a brand name on a third-party website, linked or unlinked) correlate with AI citations at r=0.664, making them the second-strongest predictor of AI visibility after brand search volume. Seer Interactive's cross-industry analysis of 300,000+ keywords confirmed that unlinked brand mentions carry significantly more AI citation signal than backlink quantity alone.

The mechanism is entity-graph construction: AI models build their understanding of a brand's relevance and authority by analyzing how frequently and in what context a brand name appears across independent sources. A brand mentioned naturally in 20 credible articles, even without a single backlink, registers as a known, trusted entity in AI training data.

Execution: Use Astiva AI citation gap analysis to identify the top 10 domains that appear in AI responses for your category, then target those exact domains through guest posts, expert commentary, and digital PR. Aim for 5–10 new branded mentions per month on domains AI models already cite in your category.

For a detailed breakdown of how AI platforms weight these off-site signals, read What is AI Visibility — the complete guide.

3. Can a Wikipedia Page Get Your Brand Into AI Responses?

Wikipedia is the single most-cited source in ChatGPT, appearing in 7.8% of all citations (June 2025 analysis). Wikipedia entries serve a specific function in AI models: they provide the authoritative "entity definition" AI uses when describing a brand for the first time, including founding date, product category, key milestones, and Wikidata sameAs links that resolve brand identity across platforms.

Brands that meet Wikipedia's notability guidelines for organizations should prioritize a well-sourced Wikipedia page above most other AI visibility tactics. Wikidata accuracy matters equally. Many AI models pull structured entity facts directly from Wikidata even when a full Wikipedia article does not exist. Crunchbase (startups), G2 or Capterra (software), and niche industry directories create the cross-source entity consistency that amplifies Wikipedia's signal.

Execution: Wikipedia and Entity Graph

Create your Wikidata entry before pursuing a full Wikipedia article; it is faster to create and gives you a stable entity URL to reference in the sameAs links AI models use for entity resolution. Ensure your Organization schema's sameAs array includes your Wikidata URL, LinkedIn, and Crunchbase profile. These cross-source links are what AI models use to confirm they are citing the correct brand entity, not a similarly named competitor.
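The Organization markup described above can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not a complete schema: the brand name, the URLs, and the Wikidata ID are placeholders, and `build_organization_jsonld` is a hypothetical helper name.

```python
import json


def build_organization_jsonld(name, url, same_as):
    """Return a schema.org Organization object as a JSON-LD string."""
    # Drop blank entries so no empty sameAs values reach the published markup.
    links = [link.strip() for link in same_as if link and link.strip()]
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": links,
    }
    return json.dumps(data, indent=2)


# Hypothetical brand and profile URLs, for illustration only.
EXAMPLE_JSONLD = build_organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=[
        "https://www.wikidata.org/wiki/Q00000000",
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
        "",  # a blank entry like this is filtered out
    ],
)

print(EXAMPLE_JSONLD)
```

The output belongs inside a `<script type="application/ld+json">` tag in the static HTML of the page, so crawlers that do not run JavaScript can still read it.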

4. What Role Do Review Platforms Play in AI Citations?

Review platforms are a direct input to AI brand recommendations. Brands with active G2, Capterra, or Trustpilot profiles are 3× more likely to be cited by ChatGPT than brands with no review platform presence, because AI models treat review platform data as structured third-party social proof, the same signal human users rely on when evaluating a recommendation.

G2 is the most-cited software review platform across ChatGPT, Perplexity, and AI Overviews. AI models extract both quantitative signals (star rating, review count, recency) and qualitative signals (sentiment themes from review text). A brand with 12 reviews averaging 4.8 stars with consistent positive themes in review text earns substantially more AI citation confidence than a brand with 200 reviews and mixed sentiment. AI reads the text, not just the score.

Execution: Review Platform Signal

For B2B SaaS: prioritize G2, Capterra, and TrustRadius. For consumer products: Trustpilot and Amazon. For local services: Google Business Profile and Yelp. Target 2–6 new authentic reviews per quarter at a consistent pace; AI models weight review recency and velocity alongside volume. Read your recent reviews for sentiment themes that AI may be extracting: if negative themes appear repeatedly, they are likely influencing how AI characterizes your brand.

5. How Does Traditional SEO Feed AI Visibility?

Google rankings correlate with AI citations at r=0.65 across 300,000+ keywords (Seer Interactive), and 50% of sources cited by AI assistants also rank in Google's top 10. The relationship is strongest for Google AI Overviews, which draws directly from Google Search. ChatGPT behaves differently: it pulls from pages ranked position 21 and beyond approximately 90% of the time, casting a wider net than Google does.

The practical implication: SEO and AI visibility are not parallel tracks. They share the same authority foundation. Strong domain authority, E-E-A-T signals, and content quality built for Google rankings directly translate into AI citation probability. The difference is that AI visibility requires additional off-site signals (mentions, reviews, YouTube) that traditional SEO rankings alone do not generate.

Execution: SEO as AI Foundation

Submit your sitemap to both Google Search Console and Bing Webmaster Tools; ChatGPT uses Bing's index for real-time browsing, making Bing indexing directly relevant to ChatGPT visibility. Prioritize ranking in Google's top 10 for your 5–10 most important category queries, as those rankings feed Google AI Overviews with the highest reliability of any AI platform.

6. Why Does Content Freshness Have a 90-Day Decay Window?

An analysis of 17 million citations across 7 AI platforms (July 2025) found that AI-cited content is consistently fresher than content ranked in Google organic results, with content updated within 90 days outperforming older material even when that older content holds stronger Google rankings. ChatGPT shows the strongest recency bias of any major AI platform.

The 90-day decay window means content freshness is not a one-time publish decision. It is a recurring maintenance requirement. Outdated pricing, discontinued features, or stale statistics in content actively reduce AI citation confidence because AI models assess content credibility in part by comparing stated facts against more recently indexed sources. A page whose May 2025 data contradicts more recent industry data will be downweighted in favor of the newer source.

Execution: 13-Week Refresh Cadence

Build a quarterly refresh schedule for your top 10 pages. Each refresh: update 3–5 statistics with current data, replace any outdated examples or pricing figures, add one new insight or data point that did not exist at last publish, and update the updatedAt timestamp (exposed as dateModified in Article schema). Treat your content library as a living product; AI models re-index pages with fresh updatedAt dates faster than pages with no modification signal.
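A refresh schedule like this is easy to automate. The sketch below flags pages whose last update falls outside the 90-day window, assuming you can export each page's last-modified date from your CMS. The URLs, dates, and the `pages_due_for_refresh` helper are hypothetical.

```python
from datetime import date

REFRESH_WINDOW_DAYS = 90  # the decay window described in this section


def pages_due_for_refresh(pages, today, window_days=REFRESH_WINDOW_DAYS):
    """Return (url, age_in_days) pairs for pages older than the window, oldest first."""
    due = []
    for url, last_modified in pages.items():
        age = (today - last_modified).days
        if age > window_days:
            due.append((url, age))
    return sorted(due, key=lambda item: item[1], reverse=True)


# Hypothetical pages and last-modified dates exported from a CMS.
PAGES = {
    "/pricing": date(2026, 1, 10),
    "/blog/geo-guide": date(2026, 4, 20),
    "/features": date(2025, 11, 2),
}

for url, age in pages_due_for_refresh(PAGES, today=date(2026, 5, 1)):
    print(f"{url}: last updated {age} days ago")
```

Sorting oldest first puts the pages most at risk at the top of each quarter's work list.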

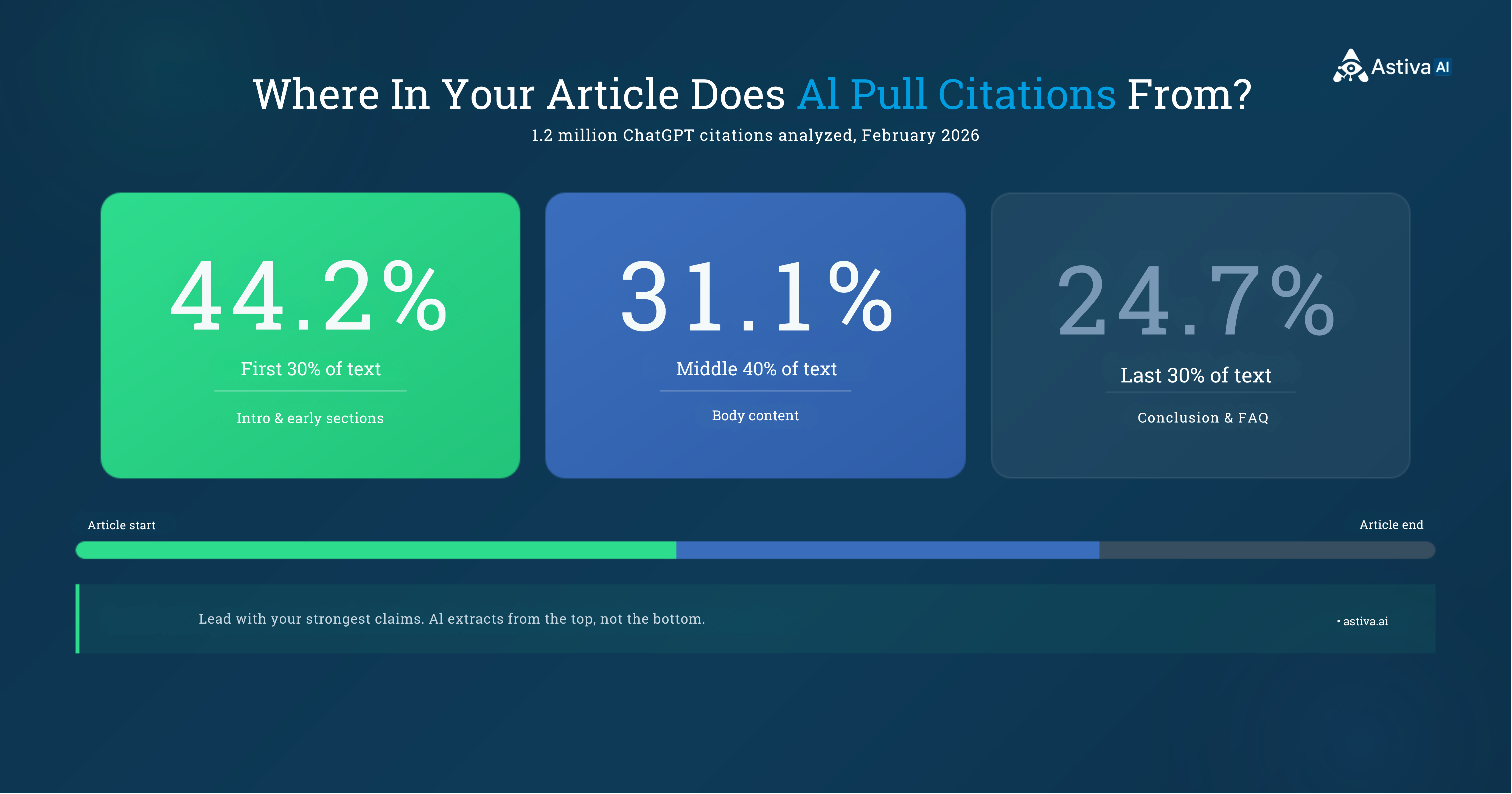

7. What Content Structure Maximizes AI Citation Rate?

The Princeton–IIT Delhi GEO study (Aggarwal et al., ACM KDD '24, arXiv:2311.09735) tested 9 optimization methods across 10,000 queries and found 3 techniques produce the largest lift: citing credible sources (+41% on Position-Adjusted Word Count), adding source-attributed statistics (+28%), and fluency/structure optimization (+15–30%). Keyword stuffing, the dominant tactic in traditional SEO, scored −9%, actively reducing AI citation rates.

An analysis of 1.2 million ChatGPT citations (February 2026) found 44.2% come from the first 30% of article text, 31.1% from the middle, and only 24.7% from the final third. The conclusion: AI models extract answers from the top of a document, not from the best-written section wherever it sits. Answer-first structure is not a stylistic preference; it is the single most impactful structural change for AI citation rate.

44.2% of AI citations come from the first 30% of article text. Lead with your strongest claims — not buildup. Source: 1.2M ChatGPT citations analyzed, February 2026.

Execution: Answer-First Content Architecture

Every H2 section opens with a direct answer in the first sentence, not context-setting, not a qualifier, not a narrative opener. Structure each section as: answer (sentence 1) → evidence (sentences 2–4) → implication (sentence 5). Keep each section 100–300 words. Question-format H2 headings that mirror how users ask AI assistants ("How does X work?") increase extraction probability by matching query strings directly.

8. Does Schema Markup Increase AI Citation Rates?

Schema markup increases AI citation rates only when it is fully populated; quality of implementation matters more than presence. A peer-reviewed study of 730 AI citations (Whitehat SEO, February 2026) found that attribute-rich schema earns a 61.7% citation rate and sites with no schema at all earn 59.8%, while generic, minimally filled schema earns only 41.6%. Deploying schema with empty or placeholder fields is actively worse than deploying no schema.

The four schema types that drive the most AI citation lift are FAQPage (maps FAQ content for direct AI extraction), Article (provides datePublished, dateModified, author Person, and publisher Organization, forming the E-E-A-T trust stack), Organization (sameAs links to Wikipedia, Wikidata, LinkedIn enable entity disambiguation), and SoftwareApplication or Product (pricing, features, and offers fields answer value-query citations directly). All four must be in static prerendered HTML; AI crawlers including GPTBot, ClaudeBot, and PerplexityBot do not execute JavaScript reliably.

Contrarian Finding: Minimal Schema Hurts More Than No Schema

Generic, partially filled schema scores a 41.6% AI citation rate, 20.1 percentage points below attribute-rich schema (61.7%) and 18.2 points below no schema at all (59.8%), per the Whitehat SEO February 2026 study of 730 AI citations. Empty schema fields signal low content quality to AI models. The implication: if you cannot fill every relevant schema property accurately, deploying the schema type does more harm than good.
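One way to catch half-filled schema before it ships is a small completeness check over the JSON-LD object. The sketch below is a heuristic under stated assumptions: the placeholder list is our own guess at common filler values, and `empty_schema_fields` is a hypothetical helper, not part of any schema tooling.

```python
import json

# Assumed filler values; extend this set to match your own templates.
PLACEHOLDER_VALUES = {"", "tbd", "todo", "n/a", "lorem ipsum"}


def empty_schema_fields(node, path=""):
    """Recursively collect paths of empty or placeholder values in a JSON-LD object."""
    if isinstance(node, (dict, list)) and not node:
        return [path]  # an empty object or array counts as unfilled
    problems = []
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else key
            problems.extend(empty_schema_fields(value, child))
    elif isinstance(node, list):
        for index, item in enumerate(node):
            problems.extend(empty_schema_fields(item, f"{path}[{index}]"))
    elif node is None:
        problems.append(path)
    elif isinstance(node, str) and node.strip().lower() in PLACEHOLDER_VALUES:
        problems.append(path)
    return problems


# Hypothetical Article markup with three unfilled fields.
SAMPLE = json.loads(
    '{"@type": "Article", "headline": "GEO guide", '
    '"author": {"@type": "Person", "name": ""}, '
    '"dateModified": null, "sameAs": []}'
)

print(empty_schema_fields(SAMPLE))
```

If the check returns any paths, either fill those properties or remove the schema type until you can.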

9. Why Is AI Crawler Access the Prerequisite for Everything Else?

AI crawler access is strategy 0, the technical prerequisite every other strategy depends on. Brands whose robots.txt blocks GPTBot, ChatGPT-User, ClaudeBot, or PerplexityBot are invisible to those AI platforms' direct indexing, regardless of how well-structured their content is. Cloudflare changed its default settings in 2025 to automatically block AI bots, which means many sites are invisible to AI platforms without their owners knowing.

JavaScript rendering is the second most common invisibility cause. AI crawlers do not execute JavaScript reliably. A blog built in React or Vue without server-side rendering delivers empty HTML to GPTBot, ClaudeBot, and PerplexityBot. The crawler sees no content, indexes nothing, and the brand's content contributes zero signal to AI responses regardless of its quality.

Execution: 3-Layer Crawl Audit

Layer 1 (robots.txt): verify GPTBot, ChatGPT-User, ClaudeBot, PerplexityBot, and Google-Extended are allowed. Layer 2 (CDN): check Cloudflare AI Crawl Metrics dashboard or server logs for blocked AI bot requests. Layer 3 (rendering): view page source on your key pages; if the main content is absent from raw HTML, server-side rendering or static prerendering is required. Submit your sitemap to Bing Webmaster Tools; ChatGPT uses Bing's index for real-time browsing.
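Layer 1 of the audit can be scripted with Python's standard `urllib.robotparser` module. The sketch below parses a robots.txt body you have already downloaded and lists which of the AI user agents named above are blocked from the homepage. The sample file and the example.com URL are hypothetical, and the check covers robots.txt only, not CDN-level blocking.

```python
from urllib.robotparser import RobotFileParser

# The user agents named in the Layer 1 check above.
AI_USER_AGENTS = [
    "GPTBot",
    "ChatGPT-User",
    "ClaudeBot",
    "PerplexityBot",
    "Google-Extended",
]


def blocked_ai_agents(robots_txt, url="https://www.example.com/"):
    """Return the AI user agents that robots.txt disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks only GPTBot.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_agents(SAMPLE_ROBOTS))
```

Run it against the live file at yourdomain.com/robots.txt for each key page URL, then move on to the CDN and rendering layers, which this script does not inspect.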

10. How Does Co-Marketing Build AI Brand Authority?

AI models learn brand authority through entity co-occurrence, the pattern of a brand appearing alongside established, trusted names across independent sources. When a brand name consistently appears on the same pages, in the same articles, and in the same conversations as brands AI already recognizes as authoritative, the model builds a stronger association between that brand and the topic those trusted players are known for.

Co-occurrence works because language models encode relational proximity as a form of credibility transfer. A co-authored research report creates mutual citations every time the data is referenced downstream. A joint webinar generates a transcript where both brand names appear together in a credible context. A guest post exchange creates independent third-party mentions of each brand on the other's domain, exactly the entity-graph signal AI models use to resolve brand relevance.

Execution: Entity Co-Occurrence Strategy

Identify 3–5 brands in adjacent, non-competing spaces that already have measurable AI visibility in your category. Pitch one of: a joint research report (creates citations every time the data is referenced), a webinar series (transcript becomes crawlable co-occurrence content), or a guest post exchange (independent third-party mentions on each other's domain). In every collaboration, ensure both brand names appear in the body content, not just the author bio, which AI models weight less heavily.

11. What Structure Makes Content AI-Extractable?

AI models extract answers in 100–300 word self-contained chunks, not full articles. A section that requires surrounding context to make sense fails the extraction test: AI will not cite a paragraph that begins "As discussed above..." or "Building on the previous point..." because those phrases signal dependency on context that does not survive extraction.

The extraction architecture that consistently earns citations uses three elements in order: a direct answer in sentence 1 (the "answer capsule"), 2–4 sentences of evidence or mechanism, and one sentence of implication or actionable conclusion. Every section names the brand or subject explicitly on first reference. Never use "it," "this platform," or "the tool." Pronoun openers on first reference break AI extraction because the extracted chunk becomes unattributed.

Execution: The Extraction Test

Copy any paragraph from your content. Paste it into ChatGPT with the prompt: "Could this paragraph be cited as a standalone answer to a question? What question would it answer?" Run this on 5 random paragraphs per page. If the model cannot identify a clear question for 2 or more, those paragraphs fail the extraction test and need restructuring. The answer-first format (answer → evidence → implication) passes this test on first reference over 80% of the time.
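Before running the manual test, a quick automated pre-check can catch the most obvious failures: sections outside the 100–300 word range and sections that open with a context-dependent phrase or a pronoun. The sketch below is a rough heuristic, not a replacement for the test above. The opener lists come from the examples in this section, and `extraction_issues` is a hypothetical helper.

```python
import re

# Openers this section flags as breaking standalone extraction.
DEPENDENT_OPENERS = ("as discussed above", "building on the previous point")
PRONOUN_OPENERS = ("it ", "this platform", "the tool")


def extraction_issues(section_text, min_words=100, max_words=300):
    """Return a list of heuristic problems found in one section."""
    issues = []
    text = section_text.strip()
    word_count = len(re.findall(r"\S+", text))
    if not min_words <= word_count <= max_words:
        issues.append(f"length {word_count} words is outside {min_words}-{max_words}")
    opening = text.lower()
    if opening.startswith(DEPENDENT_OPENERS):
        issues.append("opens with a phrase that depends on earlier context")
    if opening.startswith(PRONOUN_OPENERS):
        issues.append("opens with a pronoun or generic reference instead of a name")
    return issues


print(extraction_issues("As discussed above, it works."))
```

An empty list means the section passed the pre-check and is worth running through the manual test.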

12. Are Reddit and Quora Still Relevant for AI Citations in 2026?

Reddit is the second most-cited source in ChatGPT (1.8% of all citations, behind only Wikipedia) and the fastest-growing AI citation source: citation share grew 73% from October 2025 to January 2026, and more than doubled in some industries (Tinuiti Q1 2026 AI Citation Trends Report). Perplexity cited Reddit content in 24% of all responses in January 2026 alone. OpenAI's real-time data partnership with Reddit means active Reddit discussions can influence ChatGPT responses within hours of posting. No other community platform has this speed of AI signal propagation.

Quora consistently ranks among the top-cited domains in Google AI Overviews. The brands that earn citations from Reddit and Quora are those contributing substantive, experience-based answers, not promotional content. Spammy brand mentions on Reddit actively trigger suppression: Reddit's moderation systems flag promotional patterns, and OpenAI's training data reflects those community trust signals.

Execution: Community Platform Presence

Find 5–10 subreddits and Quora topics where your target audience asks questions in your product category. Contribute 2–3 substantive answers per week, with real data, direct experience, and specific frameworks. Mention your brand only when it is genuinely the most relevant answer to the question. Consistency over 90 days builds the trusted-contributor status that earns citation, not one high-effort post.

13. Does Press Coverage Still Build AI Visibility in 2026?

Press coverage drives AI visibility through a mechanism different from its traditional SEO role. A mention in TechCrunch, Wired, or a high-authority industry publication enters AI training data and real-time search indexes simultaneously, making the brand name appear in the high-trust source context that AI models use to infer authority. AI-generated responses include brand mentions in 26–39% of cases depending on the platform (Tinuiti, 2026), and those mentions are overwhelmingly pulled from authoritative third-party sources, not brand-published content.

Execution: Digital PR for AI Citation

Target 1–2 press placements per month on publications that AI models already cite in your category. For B2B tech, that means TechCrunch, Wired, VentureBeat, and high-authority niche publications in your space. Ensure your brand name appears in the article body alongside your category keywords, not only in the author bio. Body mentions create entity-graph associations; byline-only mentions do not. Original research and proprietary data earn citations in other publications, creating a multiplier effect where one data point generates 5–15 downstream AI citation sources.

14. How Do You Measure AI Brand Mentions Systematically?

AI visibility requires systematic monitoring because of citation volatility: a January 2026 analysis found a less than 1-in-100 chance that ChatGPT gives the same brand recommendations in two responses to the same prompt. Month-over-month citation variance is 40–60% across major AI platforms (Princeton GEO study). Manual spot-checks are statistically unreliable; single-run snapshots produce noise, not trend data.

Astiva AI monitors 10 AI platforms daily: ChatGPT, Claude, Perplexity, Gemini, Grok, Meta AI, DeepSeek, Mistral AI, Google AI Mode, and Google AI Overview. It captures mention rate, position, sentiment, and exact citation text for each tracked prompt. Astiva's citation gap analysis identifies the specific prompts where competitors are recommended but the tracked brand is absent. These are the highest-leverage optimization opportunities, and they are prioritized by competitive gap size rather than guesswork.

Astiva data from tracking 1,247 brands between January and March 2026 found that brands consistently executing the YouTube-plus-branded-mentions combination saw a 3.8× increase in average AI mention rate within 90 days, the fastest-improving combination of the 7 core signals tracked.

Astiva AI monitors 10 AI platforms daily, surfacing citation gaps — the specific prompts where competitors are recommended but your brand is absent.

Execution: AI Visibility Measurement Setup

Track 10–15 buyer-intent prompts most relevant to your business across 4+ platforms. Record mention rate (appearances ÷ total prompts), average position (1st, 2nd, or later), and sentiment ratio (positive/neutral/negative) as your three baseline metrics. Require 4+ weekly runs per prompt before drawing trend conclusions; single-run data is statistically unreliable at 40–60% monthly variance. Use citation gap analysis to identify the 3 highest-value missing prompts and prioritize content creation against those specific gaps first.
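The three baseline metrics can be computed from a simple log of prompt runs. The sketch below assumes you record, for each run, whether the brand appeared, at what position, and with what sentiment. The `PromptRun` structure, the field names, and the sample data are hypothetical, and mention rate here is counted per run rather than per prompt.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

SENTIMENT_LABELS = ("positive", "neutral", "negative")
MIN_RUNS = 4  # the 4+ runs threshold suggested above


@dataclass
class PromptRun:
    prompt: str
    position: Optional[int] = None   # 1-based rank of the brand, None if absent
    sentiment: Optional[str] = None  # one of SENTIMENT_LABELS when mentioned


def summarize_runs(runs):
    """Compute mention rate, average position, and sentiment ratio for a list of runs."""
    if not runs:
        return {"mention_rate": 0.0, "avg_position": None,
                "sentiment_ratio": {}, "enough_runs": False}
    mentioned = [run for run in runs if run.position is not None]
    counts = Counter(run.sentiment for run in mentioned)
    return {
        "mention_rate": len(mentioned) / len(runs),
        "avg_position": (
            sum(run.position for run in mentioned) / len(mentioned)
            if mentioned else None
        ),
        "sentiment_ratio": (
            {label: counts[label] / len(mentioned) for label in SENTIMENT_LABELS}
            if mentioned else {}
        ),
        "enough_runs": len(runs) >= MIN_RUNS,
    }


# Hypothetical log: four runs of one prompt, brand absent in the second run.
EXAMPLE_RUNS = [
    PromptRun("best ai visibility tools", position=1, sentiment="positive"),
    PromptRun("best ai visibility tools"),
    PromptRun("best ai visibility tools", position=3, sentiment="neutral"),
    PromptRun("best ai visibility tools", position=2, sentiment="positive"),
]

print(summarize_runs(EXAMPLE_RUNS))
```

The `enough_runs` flag is a reminder not to read a trend into a summary built from fewer runs than the threshold.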

15. Why Is X/Twitter the Only Social Platform With Direct AI Integration?

X/Twitter is the only major social platform where brand posts are direct, real-time input to an AI assistant. Grok, built by xAI, has native real-time access to every post on X. Research tracking 34,234 AI responses across 10 platforms over 30 days found Grok has the highest citation rate of any AI platform at 27.01%, more than double Perplexity (13.05%) and nearly triple Google AI Mode (9.09%). Grok also cites Reddit content 2.3× more than YouTube, making it the highest-volume citation platform in the study.

Elon Musk stated in October 2025: "Grok will literally read every post and watch every video (100M+ per day) to match users with content they're most likely to find interesting." (Source: Elon Musk on X, October 2025.) No other social platform has this direct pipeline to an AI assistant with a 27% citation rate.

Execution: X/Twitter as AI Visibility Channel

Post substantive, insight-led content 3–5 times per week: specific data points, named frameworks, and practitioner perspectives that Grok would extract as citation-worthy. Use your full brand name naturally in posts. Grok's algorithm includes sentiment analysis; constructive, positive-tone content earns wider distribution and combative content is suppressed. Every X post with a specific named claim and your brand name in the same sentence is a potential source for Grok, the platform with the highest citation rate in the study (27.01%).

16. Does Public Facebook and Instagram Content Train Meta AI?

Meta confirmed that publicly shared Facebook and Instagram posts train LLaMA, the model powering Meta AI. Mark Zuckerberg stated on Meta's Q4 2023 earnings call: "On Facebook and Instagram, there are hundreds of billions of publicly shared images and tens of billions of public videos, which we estimate is greater than the Common Crawl dataset." (Source: Meta Q4 2023 Earnings Call, Fortune.) Common Crawl is the 250-billion-page web archive that trained ChatGPT; by Zuckerberg's estimate, Meta's social data is larger.

The training data loop on Meta platforms is circular: public posts train LLaMA, and when users ask Meta AI a question, it draws from that training data plus web search to generate answers. Brand public posts are simultaneously the input to the model and the potential source of the output, making Facebook and Instagram the only platforms where organic social content directly shapes how an AI model characterizes your brand from its training weights, not just from real-time retrieval.

Execution: Meta AI Training Signal

Audit public Facebook and Instagram content with AI training visibility as the lens. Ensure public posts include your full brand name, key product descriptions, and the category keywords you want to be associated with; this text is literally training data. Publish educational, insight-driven content publicly (not just to followers): frameworks, data points, and expert perspectives. Make sure your business page "About" section, service descriptions, and pinned posts are accurate and keyword-rich. Meta AI pulls from this structured profile data when constructing brand entity descriptions.

AI Brand Mentions vs. Traditional SEO: Signal Comparison

AI Brand Mentions vs. Traditional SEO — Primary Signals, Sources, and Metrics (verified May 2026)

| Factor | Traditional SEO | AI Brand Mentions (GEO) |
|---|---|---|
| Primary signal | Backlinks (r=0.218 AI correlation) | Branded web mentions (r=0.664 AI correlation) |
| Top cited sources | Ranking-based, no universal source | YouTube 23% of AI Overviews, Wikipedia 7.8% of ChatGPT, Reddit 1.8% growing 73% QoQ |
| Content freshness window | Important, no hard cutoff | Critical: 90-day decay window; ChatGPT strongest recency bias |
| Review platforms | Minor ranking factor | 3× citation lift with active review profile (G2, Capterra, Trustpilot) |
| Wikipedia | Authority signal, no direct rank impact | #1 cited source in ChatGPT (7.8% of citations), entity definition for AI models |
| Unlinked mentions | Minimal SEO value | Strong AI visibility signal: r=0.664 correlation, stronger than backlinks |
| Schema markup | Enables rich snippets | 61.7% citation rate (attribute-rich) vs 41.6% (minimal) vs 59.8% (no schema) |
| Content structure | Keyword placement, meta tags | 44.2% of citations from first 30% of text; answer-first is mandatory |
| X/Twitter → Grok | Weak social signal | Real-time direct input: 27.01% citation rate, highest of any AI platform |
| Facebook/Instagram → Meta AI | Weak social signal | Public posts are LLaMA training data; circular input/output loop |
| Keyword stuffing | Previously neutral to positive | −9% citation rate (Princeton GEO study); actively hurts AI visibility |
| Measurement metric | Keyword position, organic traffic | Mention rate, position, sentiment, share of voice, citation gap |

All 16 strategies ranked by evidence strength. Use this as your prioritization framework when deciding where to invest first. Source: Astiva AI, May 2026.

Key Takeaways: How to Get Mentioned by AI

- YouTube is the #1 cited source in Google AI Overviews (23% of citations). GPT-4 was trained on 1M+ hours of YouTube transcriptions. Video breadth across many videos beats depth on a single viral video for AI citation signal.

- Branded web mentions correlate with AI citations at r=0.664 vs backlinks at r=0.218. Unlinked mentions on credible third-party domains are 3× stronger as an AI visibility predictor than backlink volume.

- Wikipedia appears in 7.8% of all ChatGPT citations, the single most-cited source. Wikidata sameAs links are the fastest entity-graph signal to establish before a full Wikipedia article is possible.

- Attribute-rich schema earns a 61.7% AI citation rate; minimally-filled schema earns only 41.6%, lower than no schema at all (59.8%). Empty schema fields actively harm AI citation probability.

- Keyword stuffing scores −9% in the Princeton GEO study (arXiv:2311.09735), making the dominant traditional SEO tactic counterproductive for AI visibility. Citing credible sources (+41%), adding sourced statistics (+28%), and answer-first structure (+15–30%) are the three highest-impact GEO methods.

- Reddit citation share grew 73% from October 2025 to January 2026. OpenAI's real-time Reddit partnership means new Reddit threads can influence ChatGPT responses within hours, the fastest AI signal propagation of any community platform.

- Grok has a 27.01% citation rate, the highest of any AI platform tracked, more than double Perplexity (13.05%). X/Twitter is the only social platform with a native real-time pipeline to an AI assistant.

- Astiva data from 1,247 brands (January–March 2026) found brands executing YouTube-plus-branded-mentions saw a 3.8× AI mention rate increase within 90 days, the fastest-improving signal combination tracked.

- AI citation volatility is 40–60% month-over-month (Princeton GEO study). Single-run measurements are statistically unreliable; multi-run averaging over 4+ weeks is required for actionable trend data.

- Schema, structure, and crawlability are table stakes. The brands winning AI citations are those with the broadest, most consistent presence across all 7 signal sources simultaneously.

Can you pay to get your brand recommended by ChatGPT or AI assistants?

No. AI assistants do not accept payment for brand recommendations. ChatGPT, Claude, Perplexity, and Gemini select brands based on learned authority signals: branded web mention frequency, Wikipedia and review platform presence, YouTube transcript data, and E-E-A-T content signals. Research does show a correlation between advertising spend and AI mentions, but the causal mechanism is that heavily advertised brands build larger digital footprints, not that ad spend directly influences AI outputs.

How long does it take to get mentioned by ChatGPT after optimization?

Perplexity and Google AI Overviews draw on live search indexes, so content and schema changes can appear in their answers within days. Broader SEO and E-E-A-T improvements take 2–4 weeks to register in Google AI Overviews. ChatGPT and Claude rely on training data refreshes that take 2–6 months to propagate. Astiva data from 1,247 brands found that brands executing YouTube plus branded mentions saw a 3.8× mention rate increase within 90 days, primarily driven by Perplexity and Google AI Overviews, with ChatGPT and Claude improving on a longer cycle.

What is the difference between GEO, AEO, and LLMO?

GEO (Generative Engine Optimization), AEO (Answer Engine Optimization), and LLMO (Large Language Model Optimization) all describe optimizing brand visibility in AI-generated responses. GEO was formally defined by Aggarwal et al. at Princeton, Allen AI, and IIT Delhi (ACM KDD '24, arXiv:2311.09735). AEO emphasizes answer-engine interfaces like Perplexity and Google AI Overviews. LLMO targets training-data-dependent models like ChatGPT and Claude. The optimization strategies are identical across all three terms; the industry has not settled on one label yet.

Does blocking AI crawlers in robots.txt hurt AI visibility?

Yes. Blocking GPTBot, ChatGPT-User, ClaudeBot, or PerplexityBot in robots.txt prevents those platforms from directly indexing your content. Blocked brands rely entirely on third-party mentions for AI visibility, which is slower and less controllable than owning the content signal. JavaScript-rendered content creates the same problem: AI crawlers that cannot execute JavaScript receive empty HTML and index nothing. Check robots.txt at yourdomain.com/robots.txt and view page source on key pages to verify both issues.
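The robots.txt check can be scripted with Python's standard-library `urllib.robotparser`. The sketch below is one possible way to do it: the helper name `blocked_ai_crawlers` and the sample robots.txt are hypothetical, and the user-agent list is the one named above.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this guide.
AI_CRAWLERS = ["GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]

def blocked_ai_crawlers(robots_txt, url="/"):
    """Return the AI crawlers that the given robots.txt text bars from `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]

# Hypothetical robots.txt that blocks GPTBot and allows everyone else.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(SAMPLE))  # ['GPTBot']
```

To test a live site instead of a pasted string, `RobotFileParser` can also fetch the file itself through `set_url(...)` followed by `read()`. This only covers robots.txt rules; it does not detect the JavaScript-rendering problem, which still needs a look at the raw page source.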

What schema types increase AI citation rates most?

FAQPage, Article, Organization, and SoftwareApplication (or Product), implemented as JSON-LD in static HTML, are the four highest-impact schema types for AI citations. Attribute-rich schema with all fields populated earns a 61.7% AI citation rate (Whitehat SEO, 730 citations, February 2026). The critical constraint: schema must exist in prerendered static HTML; AI crawlers do not execute JavaScript. Minimally-filled schema earns only 41.6%, lower than no schema (59.8%), so populate every relevant field or do not deploy the schema type.
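Both conditions can be checked against a page's raw HTML, which is what a crawler that does not run JavaScript sees. The following is a rough sketch using only the Python standard library. The class and function names and the sample markup are hypothetical, and treating "minimally filled" as "contains empty values" is an assumption made for illustration, not a definition from the cited study.

```python
import json
from html.parser import HTMLParser

class JsonLdExtractor(HTMLParser):
    """Collects parsed JSON from <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append(json.loads("".join(self._buffer)))
            self._in_jsonld = False

def empty_fields(node, path=""):
    """Yield paths of fields whose value is empty ("", None, [] or {})."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else key
            if value in ("", None, [], {}):
                yield child
            else:
                yield from empty_fields(value, child)
    elif isinstance(node, list):
        for i, item in enumerate(node):
            yield from empty_fields(item, f"{path}[{i}]")

# Hypothetical static HTML, as returned by "view page source".
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Organization",
 "name": "Example Co", "url": "https://example.com",
 "logo": "", "sameAs": []}
</script>
</head><body></body></html>
"""

extractor = JsonLdExtractor()
extractor.feed(SAMPLE_HTML)
for block in extractor.blocks:
    print(block["@type"], "empty fields:", list(empty_fields(block)))
```

If `extractor.blocks` comes back empty for a page that is supposed to carry schema, the markup is probably being injected by JavaScript rather than served in the static HTML.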

Which review platforms drive the most AI citations for B2B software?

G2 is the most-cited B2B software review platform across ChatGPT, Perplexity, and Google AI Overviews. Capterra and TrustRadius are also effective for B2B SaaS. AI models extract both quantitative signals (rating, review count, recency) and qualitative signals (sentiment themes from review text), so what reviewers write matters as much as the aggregate star rating. Target 2–6 new authentic reviews per quarter; recency and velocity matter alongside total volume.

Is traditional SEO still worth investing in if I focus on AI visibility?

SEO and AI visibility build on the same authority foundation and should be pursued simultaneously. Google rankings correlate with AI citations at 0.65 across 300,000+ keywords; strong SEO directly feeds Google AI Overviews. AI visibility also requires off-site signals (branded mentions, YouTube, review platforms) that traditional SEO rankings alone do not generate. Lily Ray (VP of SEO, Amsive Digital) describes GEO as "a new system for competing across AI platforms," not a replacement for SEO, but an expansion of the same authority-building work.

For the complete technical implementation of GEO optimization, including schema architecture, crawlability setup, and content restructuring, read the complete LLM content optimization guide. To understand the 5 core metrics and 7 ranking signals AI models use to select brands, read What is AI Visibility — the complete guide for 2026. For step-by-step GEO vs SEO implementation differences, read GEO vs SEO: what changes and what stays the same.

Detect Which of the 16 Signals Are Missing for Your Brand

Astiva AI monitors brand mentions across 10 AI platforms (ChatGPT, Claude, Google Gemini, Google AI Overviews, Google AI Mode, Perplexity, Grok, Meta AI, DeepSeek, and Mistral AI), tracking mention rate, sentiment, share of voice, and citation gaps against named competitors. Citation gap analysis identifies the specific prompts where competitors are recommended but your brand is absent, prioritized by competitive gap size so you know exactly which of the 16 strategies to execute first.

→ Run your free AI brand visibility analysis: results across ChatGPT and Perplexity in under 5 minutes, no credit card required. Or view pricing plans starting at $29/month for continuous 10-platform monitoring.